Sparse-View 3D Gaussian Splatting in the Wild

A sparse-view 3D synthesis framework for unconstrained real-world scenes, leveraging reference-guided diffusion refinement and sparsity-aware Gaussian enhancement to improve rendering quality and robustness.

Wongi Park1, Jordan A. James2, Myeongseok Nam3, Minjae Lee4, Soomok Lee5, Sang-Hyun Lee1, and William J. Beksi2

1Ajou University 2University of Texas at Arlington 3GenGenAI 4Seoul National University 5Kennesaw State University

Abstract

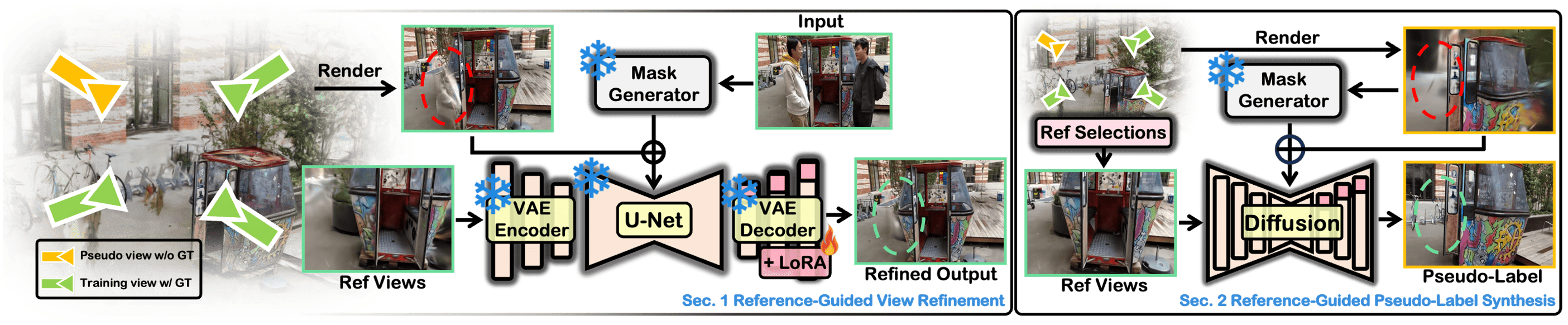

We propose a novel sparse-view 3D synthesis framework for unconstrained real-world scenes that contain distractors. Our work contrasts with previous approaches, which either perform novel view synthesis from a set of constrained images without transient elements or rely on dense image collections to build robust 3D representations in the presence of distractors. To this end, we introduce reference-guided view refinement with a diffusion model, conditioned on a transient mask and a reference image, to enhance the 3D representation and mitigate artifacts in rendered views. Furthermore, we address sparse regions in the Gaussian field via pseudo-view generation, together with a sparsity-aware Gaussian replication strategy that increases Gaussian density in those regions. Extensive experiments on publicly available datasets demonstrate that our method consistently outperforms existing approaches (e.g., improving PSNR by 17.2%, SSIM by 10.8%, and LPIPS by 4.0%) and produces high-fidelity results. This advancement paves the way for 3D reconstruction of real-world scenes without labor-intensive data acquisition.
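This page does not detail how pseudo views are generated. One common way to obtain extra viewpoints between sparse training cameras is to interpolate their poses, shown here with quaternion slerp for orientation and linear interpolation for camera centers. This is a minimal sketch under stated assumptions: each camera is given as a unit quaternion (w, x, y, z) plus a center, views are ordered so that neighbors are adjacent, and `slerp` and `generate_pseudo_poses` are hypothetical helpers, not the authors' code.

```python
import numpy as np


def slerp(q0, q1, t):
    """Spherical linear interpolation between unit quaternions (w, x, y, z)."""
    q0 = np.asarray(q0, dtype=float) / np.linalg.norm(q0)
    q1 = np.asarray(q1, dtype=float) / np.linalg.norm(q1)
    dot = float(np.dot(q0, q1))
    if dot < 0.0:
        # q and -q encode the same rotation; flip one to take the shorter arc.
        q1, dot = -q1, -dot
    if dot > 0.9995:
        # Nearly identical rotations: normalized lerp avoids dividing by ~0.
        q = q0 + t * (q1 - q0)
        return q / np.linalg.norm(q)
    theta0 = np.arccos(dot)  # angle between the two quaternions
    theta = theta0 * t
    s0 = np.sin(theta0 - theta) / np.sin(theta0)
    s1 = np.sin(theta) / np.sin(theta0)
    return s0 * q0 + s1 * q1


def generate_pseudo_poses(quats, centers, n_between=2):
    """Hypothetical helper: (quaternion, center) pairs between consecutive cameras."""
    pseudo = []
    for i in range(len(quats) - 1):
        # Interior parameters only; t=0 and t=1 are the real training views.
        for t in np.linspace(0.0, 1.0, n_between + 2)[1:-1]:
            q = slerp(quats[i], quats[i + 1], t)
            c0 = np.asarray(centers[i], dtype=float)
            c1 = np.asarray(centers[i + 1], dtype=float)
            pseudo.append((q, (1.0 - t) * c0 + t * c1))
    return pseudo
```

How the rendered pseudo views are then supervised is not described on this page and is not sketched here.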

Method in 30 seconds

Motivation: Why perform 3D reconstruction from sparse real-world scenarios?

Existing methods perform well with dense inputs or clean sparse inputs, but struggle in real-world scenarios where only a few images are available and distractors cause multi-view inconsistencies. We address novel view synthesis in such sparse, unconstrained scenarios.

Unified Reconstruction method

We tackle novel view synthesis in sparse and unconstrained real-world scenarios. Our approach uses a reference-guided diffusion model to refine views and reduce artifacts, along with sparsity-aware Gaussian replication to complete missing geometry, yielding high-quality renderings.
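As a rough illustration of sparsity-aware replication, the sketch below flags Gaussians whose mean distance to their k nearest neighbors is unusually large and appends jittered copies of them. The kNN-distance criterion, the percentile threshold, the jitter scale, the attribute layout, and the names `knn_mean_distance` and `replicate_sparse_gaussians` are all assumptions for illustration; the page does not specify how sparsity is measured or how replicas are placed.

```python
import numpy as np


def knn_mean_distance(points, k):
    """Mean Euclidean distance from each point to its k nearest neighbors."""
    # Brute-force pairwise distances: O(N^2) memory, fine for a small sketch.
    diff = points[:, None, :] - points[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))
    np.fill_diagonal(dist, np.inf)  # exclude each point from its own neighbors
    nearest = np.sort(dist, axis=1)[:, :k]
    return nearest.mean(axis=1)


def replicate_sparse_gaussians(gaussians, k=4, percentile=90.0, copies=2, seed=0):
    """Hypothetical helper: append jittered copies of Gaussians in sparse areas.

    `gaussians` maps attribute names (e.g. "xyz", "opacity") to float arrays
    whose first axis indexes Gaussians; "xyz" must have shape (N, 3).
    """
    rng = np.random.default_rng(seed)
    xyz = gaussians["xyz"]
    mean_dist = knn_mean_distance(xyz, k)

    # Assumed sparsity criterion: top (100 - percentile)% of kNN distances.
    sparse = mean_dist > np.percentile(mean_dist, percentile)
    src = np.repeat(np.nonzero(sparse)[0], copies)

    # Copy every attribute of the selected Gaussians and append the copies.
    out = {name: np.concatenate([attr, attr[src]], axis=0)
           for name, attr in gaussians.items()}

    # Spread the copies around their source, scaled by local neighbor spacing.
    noise = rng.normal(size=(len(src), 3)) * (0.5 * mean_dist[src])[:, None]
    out["xyz"][len(xyz):] += noise
    return out, sparse
```

The brute-force distance matrix grows quadratically with the number of Gaussians, so a real scene with millions of primitives would need a spatial index (e.g., a k-d tree) instead.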

Results

Benchmark Performance: NeRF On-the-go

SaveWildGS establishes a new state of the art for sparse-view synthesis in unconstrained environments. By integrating reference-guided refinement and sparsity-aware replication, it significantly outperforms vanilla 3DGS and recent in-the-wild methods.

- 3DGS: PSNR 13.18 / SSIM 0.331

- WildGaussians: PSNR 19.72 / SSIM 0.675

- Difix3D+: PSNR 21.38 / SSIM 0.723

- SaveWildGS (Ours): PSNR 25.06 / SSIM 0.801

Our framework achieves an average improvement of +17.2% in PSNR and +10.8% in SSIM across unconstrained real-world datasets. It maintains high-speed rendering (110 FPS) while effectively handling diverse distractors.
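The headline percentages can be checked against the list above: measured relative to the strongest listed baseline, Difix3D+, the NeRF On-the-go numbers round to +17.2% PSNR and +10.8% SSIM. The page does not state which baseline the averages are computed against, so treat this as a consistency check rather than the authors' procedure; `relative_gain` is a hypothetical helper.

```python
# PSNR / SSIM on NeRF On-the-go, copied from the list above.
baselines = {
    "3DGS": (13.18, 0.331),
    "WildGaussians": (19.72, 0.675),
    "Difix3D+": (21.38, 0.723),
}
ours = (25.06, 0.801)  # SaveWildGS


def relative_gain(ours_value, baseline_value):
    """Relative improvement in percent (valid for higher-is-better metrics)."""
    return 100.0 * (ours_value - baseline_value) / baseline_value


for name, (psnr, ssim) in baselines.items():
    print(f"{name:>14}: PSNR {relative_gain(ours[0], psnr):+.1f}%, "
          f"SSIM {relative_gain(ours[1], ssim):+.1f}%")
```

The Difix3D+ row prints +17.2% and +10.8%; gains over WildGaussians and 3DGS are correspondingly larger. LPIPS is lower-is-better, so its improvement would be computed with the sign flipped.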

Benchmark Performance: Photo Tourism

Evaluated on three landmark scenes, SaveWildGS demonstrates superior performance in handling large-scale unconstrained photo collections with varying appearances and distractors.

- WildGaussians: PSNR 14.73 / SSIM 0.412

- GS-W: PSNR 14.03 / SSIM 0.482

- SparseGS-W: PSNR 19.01 / SSIM 0.550

- SaveWildGS (Ours): PSNR 19.86 / SSIM 0.779

While SparseGS-W achieves competitive results, it still struggles with distractors because it does not explicitly account for transient elements. SaveWildGS outperforms it, with a modest PSNR gain (19.86 vs. 19.01) and a large SSIM gain (0.779 vs. 0.550), by explicitly filling sparse regions and refining corrupted views with diffusion guided by a reference image and a transient mask.

Citation

If you find this work useful, please consider citing:

Coming Soon...